Chinese tech giant Huawei is in hot water over a facial recognition patent application describing AI-powered technology that could feed into discrimination against China’s ethnic Uighur Muslim population.

Video surveillance research group IPVM called out Shenzhen-based Huawei for its 2018 patent application for an “object attribute recognition method, device, computing equipment, and system.” The technology is meant to identify pedestrians by their gender, age, body type, clothing, and race. For race, the document distinguished between Han and Uighur, the ethnic majority and an ethnic minority in China, respectively:

For example, the target object is a pedestrian. The attributes of the target object can be gender (male, female), age (such as teenagers, middle-aged, old), race (Han, Uyghur), body (fat, thin, standard), top [clothing] style (short sleeve, long sleeve), top color (black, red, blue, green, white, yellow), etc. [From CN109902548A]

Facial recognition technology specifically tuned for Uighur analytics has been incorporated into public surveillance systems across China, IPVM says. The Huawei patent was co-authored by the Chinese Academy of Sciences, the government’s top research arm.

Asked about its involvement, Huawei said the technology is not meant to identify individuals’ race. A Huawei spokesperson told CNN that the company would amend its patent application, saying that ethnicity identification should not have been part of the filing. However, IPVM noted a similar pattern of behavior from other Chinese tech companies caught abetting discrimination against Uighurs.

In the last few years, patents related to Uighur analytics have surfaced from Chinese megacorporations like Alibaba and Baidu, as well as Chinese startups like Megvii and SenseTime. When questioned, each company stated its opposition to racial discrimination.

Megvii told BBC News, which broke the Huawei story with IPVM, that it would “withdraw” its patent application. The facial recognition startup was caught in 2018 developing “Uighur alarms” with Huawei.

Uighurs mainly reside in China’s westernmost province of Xinjiang, which was annexed in 1949 when it was the independent nation of East Turkestan. Anti-terrorism campaigns and religious controls were established to quell separatist sentiment early on, though these have persisted well into the 21st century. The region has seen at least 35 million deaths due to military oppression and famine.

A common issue

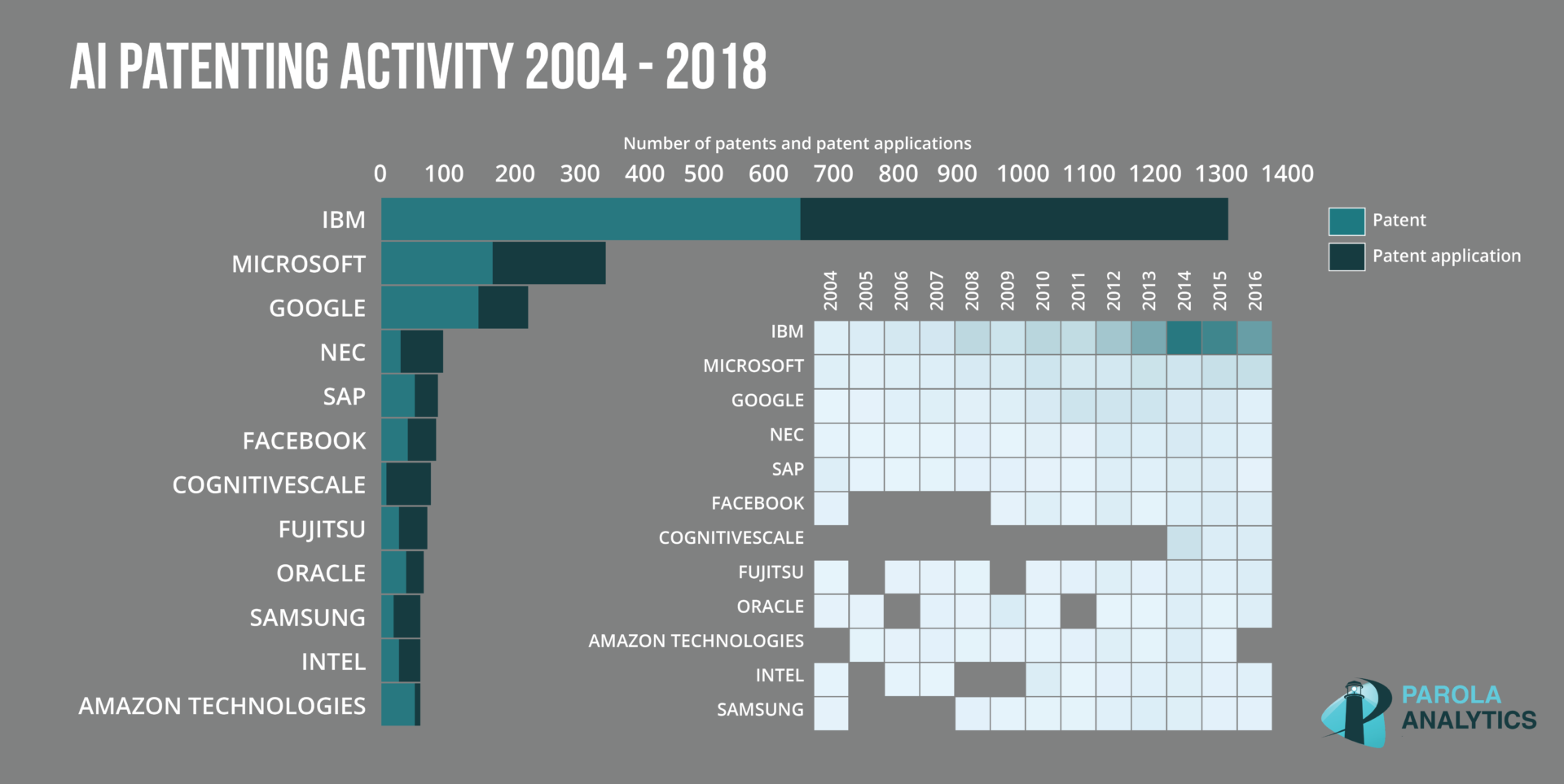

Surveillance overreach enabled by race-identifying technology is a hot-button issue not just in China. In the U.S., patent leader IBM made an abrupt exit from the facial recognition market amid nationwide riots over police brutality in the middle of 2020. In its public letter to Congress, IBM specifically denounced the use of facial recognition technology for racial profiling. It also stressed that the artificial intelligence behind law enforcement tools needs to be tested for bias.

Axon, maker of the Taser stun gun, arrived at the same decision about a year earlier. The company abandoned facial recognition, citing ethics concerns, just months after applying for several patents in the space.

In 2019, the National Institute of Standards and Technology published an analysis of facial recognition algorithms across races. Many of the algorithms, which originated from companies like Microsoft and Intel, were found to frequently misidentify non-white faces. A Harvard blog traced the issue to the way AI is trained — in the case of facial recognition, using datasets of faces, which turn out to be predominantly white. This left the AI algorithms with not much to work with for everyone else, and led to wildly uneven performance seen ostensibly as racial bias.
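To make "uneven performance" concrete, here is a minimal sketch of how error rates might be compared across demographic groups. It is not the method used in the NIST analysis. The function name, the group labels, and the toy trial data are all hypothetical and chosen only for illustration. The idea is to count, for each group, how often a system wrongly declares that two different people are the same person.

```python
from collections import defaultdict


def false_match_rate_by_group(trials):
    """Return the false match rate per group.

    Each trial is a tuple (group, same_person, predicted_match).
    Only trials comparing two different people are counted, since a
    false match can only occur when the faces belong to different people.
    """
    impostor_counts = defaultdict(int)
    false_matches = defaultdict(int)
    for group, same_person, predicted_match in trials:
        if not same_person:
            impostor_counts[group] += 1
            if predicted_match:
                false_matches[group] += 1
    return {g: false_matches[g] / n for g, n in impostor_counts.items()}


# Hypothetical toy data, not real measurements.
trials = [
    ("group_a", False, False),
    ("group_a", False, False),
    ("group_a", False, False),
    ("group_a", False, True),
    ("group_b", False, True),
    ("group_b", False, True),
    ("group_b", False, False),
    ("group_b", False, False),
    ("group_a", True, True),
    ("group_b", True, True),
]

rates = false_match_rate_by_group(trials)
print(rates)
```

In this made-up data, the system wrongly matches one in four different-person pairs for the first group and two in four for the second. A gap of that kind between groups is what a bias test would be looking for.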

As China quietly pushes through with facial recognition for its public surveillance systems, and the U.S. grapples with its ethical implications, similar conversations must be had in every other country, for the sake of people’s safety and privacy in the near future.