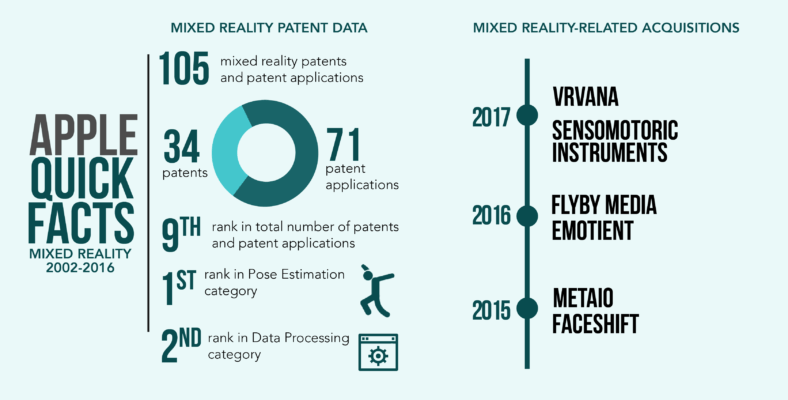

A patent application from Allergan, a subsidiary of biopharmaceutical giant AbbVie, describes ways to conduct clinical vision assessments using a virtual reality (VR) headset.

The invention is aimed at helping diagnose people with inherited retinal diseases, such as Leber congenital amaurosis (LCA) or retinitis pigmentosa (RP). Both affect the retina, the light-sensitive tissue at the back of the eye. LCA is characterized by increased sensitivity to light, involuntary eye movements, and extreme farsightedness. RP symptoms include difficulty seeing at night and a loss of peripheral vision. Both of these disorders manifest in childhood and are known to progressively worsen with time.

Allergan notes that tests already exist for measuring the functional vision of retinal disease patients, such as the Multi-luminance Mobility Test (MLMT). The MLMT checks how quickly and accurately a person can get through an obstacle course at different levels of environmental illumination. The problem is that physical navigation courses require large dedicated spaces, time-consuming illuminance calibration, and additional time and labor to reconfigure, and they rely on manual, subjective scoring.

Allergan’s VR implementation is expected to eliminate these disadvantages by digitizing the navigation course, automating its scoring, and introducing tests that even a standardized approach like MLMT does not include.

![Fig. 1. [Left] A perspective view of one virtual room in a navigation course according to a preferred embodiment of the invention. [Right] The virtual room as displayed on a VR headset. A removable virtual obstacle, in this case, a toy xylophone [402], appears in the user’s path.](https://parolaanalytics.com/wp-content/uploads/2021/09/virtual-room-in-a-navigation.jpg)

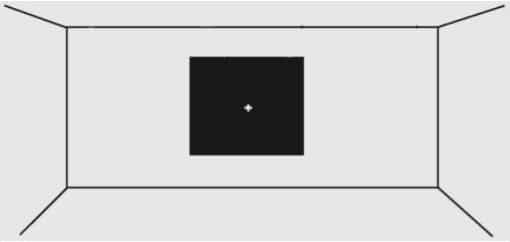

Fig. 1. [Left] A perspective view of one virtual room in a navigation course according to a preferred embodiment of the invention. [Right] The virtual room as displayed on a VR headset. A removable virtual obstacle, in this case, a toy xylophone [402], appears in the user’s path.

The navigation course includes multiple virtual rooms that a person must traverse at different luminance levels. The rooms would feature virtual furniture of varying heights, as well as removable objects that may randomly appear in the path. Allergan says these objects would be positioned at approximately eye level, possibly floating. The user may remove the obstacle by looking directly at it.
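The gaze-based removal described above can be illustrated with a small geometric check. The following is a minimal sketch, not the method in Allergan's application: the function name `is_looking_at`, the 5-degree tolerance, and the coordinate layout are all assumptions made for illustration. It treats the obstacle as "looked at" when the angle between the gaze direction and the line from the eye to the obstacle is small.

```python
import math

def is_looking_at(eye_pos, gaze_dir, target_pos, max_angle_deg=5.0):
    """Return True if the gaze ray points at the target within max_angle_deg.

    eye_pos, gaze_dir, target_pos are (x, y, z) tuples in the same
    coordinate system. The 5-degree default is an arbitrary illustrative
    tolerance, not a value from the patent application.
    """
    to_target = [t - e for t, e in zip(target_pos, eye_pos)]
    dist = math.sqrt(sum(c * c for c in to_target))
    gaze_len = math.sqrt(sum(c * c for c in gaze_dir))
    if dist == 0 or gaze_len == 0:
        return False  # degenerate input: no direction to compare
    cos_angle = sum(g * t for g, t in zip(gaze_dir, to_target)) / (dist * gaze_len)
    cos_angle = max(-1.0, min(1.0, cos_angle))  # guard against rounding error
    return math.degrees(math.acos(cos_angle)) <= max_angle_deg
```

A real headset would likely also require the gaze to dwell on the object for some minimum time before removing it, which this sketch leaves out.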

A person’s vision or the efficacy of treatment may be assessed via a number of performance metrics for the navigation course. These include the time it takes for a user to complete a room, the number of instances when they collide with virtual objects, and their performance at varying light levels.
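To make these metrics concrete, here is a hedged sketch of how per-room results might be grouped by light level. The `RoomTrial` record, its field names, and the `summarize_by_luminance` helper are hypothetical and are not taken from the patent application; the luminance unit is left unspecified.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean

@dataclass
class RoomTrial:
    """One pass through a virtual room (hypothetical record layout)."""
    luminance: float          # light level of the room; unit is an assumption
    completion_time_s: float  # time taken to complete the room, in seconds
    collisions: int           # number of collisions with virtual objects

def summarize_by_luminance(trials):
    """Group trials by luminance and report mean time and total collisions."""
    grouped = defaultdict(list)
    for trial in trials:
        grouped[trial.luminance].append(trial)
    return {
        lum: {
            "mean_time_s": mean(t.completion_time_s for t in group),
            "total_collisions": sum(t.collisions for t in group),
        }
        for lum, group in sorted(grouped.items())
    }
```

Comparing the summaries for a dim room and a bright room, or for the same room before and after treatment, gives the kind of automated scoring the application contrasts with manual scoring of physical courses.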

These are the additional tests described in Allergan’s patent application:

![Fig. 2. [Left] An alphanumeric character that may be used in the low vision visual acuity assessment. [Right] An implementation of the test that requires the user to indicate the directionality of the virtual object.](https://parolaanalytics.com/wp-content/uploads/2021/09/alphanumeric-character-that-may-be-used-in-the-low-vision-visual-acuity.jpg)

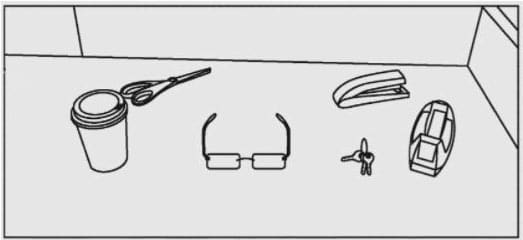

Fig. 2. [Left] An alphanumeric character that may be used in the low vision visual acuity assessment. [Right] An implementation of the test that requires the user to indicate the directionality of the virtual object.

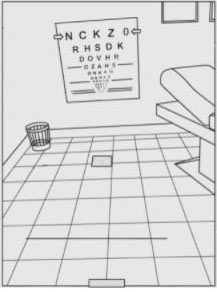

Fig. 3. A virtual eye chart located on a virtual wall. Users are instructed to read one line of the eye chart, and the system measures their progress as they read the alphanumeric characters.

Fig. 4. A small red cross on a black background may serve as the target in an oculomotor instability assessment. The user focuses on the small target while the system tracks the location of the center of the pupil and generates eye-tracking data.
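One simple way to turn the eye-tracking data from the oculomotor instability assessment into a single number is to measure how far the tracked pupil positions scatter around their average. The sketch below is an illustrative assumption, not the measure specified in the application: `fixation_dispersion` is a hypothetical helper that computes the root-mean-square distance of the samples from their centroid, with larger values suggesting a less stable fixation.

```python
import math

def fixation_dispersion(samples):
    """RMS distance of (x, y) pupil-center samples from their centroid.

    samples is a non-empty sequence of (x, y) pairs in any consistent unit
    (pixels, degrees). This is an illustrative dispersion measure only.
    """
    if not samples:
        raise ValueError("at least one sample is required")
    n = len(samples)
    cx = sum(x for x, _ in samples) / n
    cy = sum(y for _, y in samples) / n
    squared = sum((x - cx) ** 2 + (y - cy) ** 2 for x, y in samples)
    return math.sqrt(squared / n)
```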

Fig. 5. A virtual scene containing multiple virtual objects is used for an item search assessment. Scenes may be displayed in both well-lit and poorly lit conditions. Users are instructed to select an object within the scene, and the system records whether they make accurate selections.

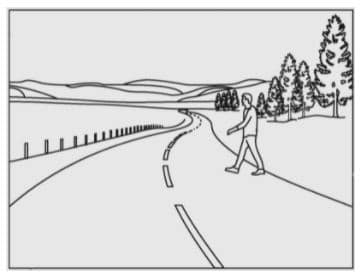

Fig. 6. An example display of a virtual driving course for the user to navigate. The VR environment used in the driving assessment may also be presented at high and low luminance levels. The user may be tasked with driving through a virtual street on a sunny day, or through a busy urban area at nighttime. The system may also ask the user to park their vehicle in different scenarios. Performance metrics, including the number of collisions, are gathered.

The featured patent application, “Systems, Methods, and Computer Program Products for Vision Assessments Using a Virtual Reality Platform”, was filed with the USPTO on February 19, 2021, and published thereafter on August 26, 2021. The listed applicant is Allergan, Inc. The listed inventors are Amber Lewis, Francisco J. Lopez, and Gaurang Patel.