Technology has boomed in the last decade and social media is no exception. Millions of people around the globe use social media as a source of information, often over-sharing what they think others want to hear or see.

Social media sites are now considered a primary source of news because they let anyone, from publishers to original sources, share what matters to them. That same openness raises the risk: people tend to share content without verifying whether it is true.

With the proliferation of fake news, deep fakes, and disinformation, it is difficult to tell whether what you see on your social media feed is factual. It is even more difficult to stop it from spreading.

This is the problem the tech industry faces: a challenge of its own making. Partly because of backlash from governments and partly on ethical grounds, the industry is now seeking to exercise some degree of control over the problem and curb misuse.

What factors caused disinformation to spread

Several conditions made fake news easy to manipulate and spread. This report notes some of the major ones:

- Algorithms relied on data and learning to provide personalized content on social platforms, whether or not the content was valid or factual.

- Specific targeting allowed content to be seen by specific sets of people, taking advantage of the large amounts of data that platforms had on their users.

- AI- and bot-generated content, which publishers used to produce content quickly, was not moderated, creating artificial and questionable interest in organic stories.

- AI-based moderation algorithms were neither stringent enough nor able to make sound decisions about content that may have been misleading.

In the United States, many attempts to spread disinformation and fake news were made around the time of the 2016 U.S. presidential election.

The 2016 U.S. election was highly disputed due to rampant misinformation. A study by Stanford University that looked at Facebook estimated that the average U.S. adult read and remembered one or more fake news stories during the 2016 election period. The study also found that users had higher exposure to pro-Trump articles than to pro-Clinton articles.

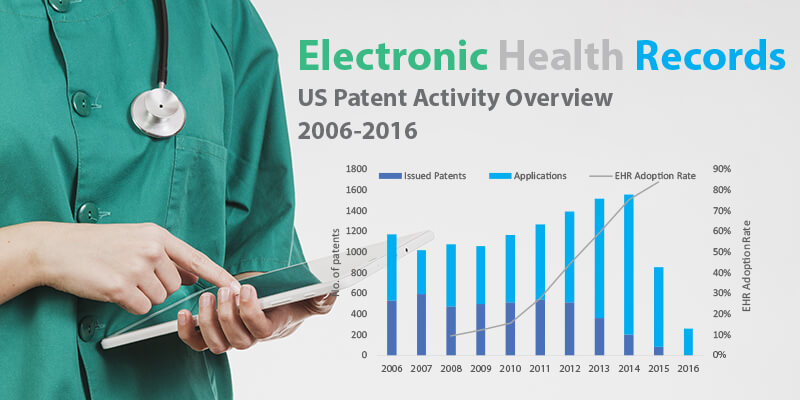

This report from Parola Analytics notes that patenting activity on technologies used to detect disinformation and fake news started gaining momentum that same year, after social media usage had increased tenfold over the preceding decade.

Similar attempts at malicious manipulation of news have been observed in major global events since then, including COVID hoaxes and violence in India.

How tech is solving the disinformation problem

Tech is now cracking down on the proliferation of fake and harmful content online by implementing filters and software to detect and flag it. It is also exploring the use of artificial intelligence tools and algorithms.

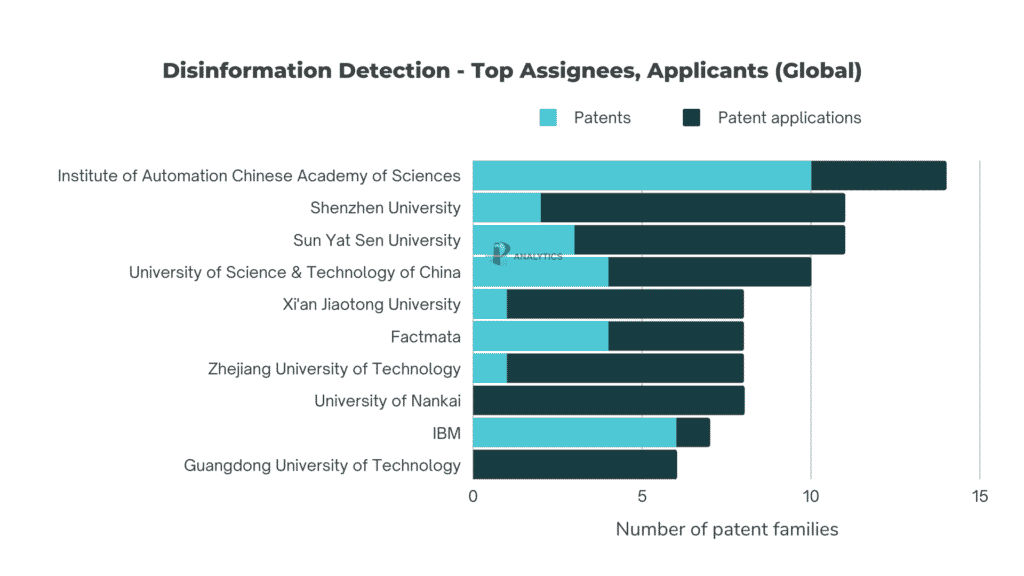

Fact-checking initiatives are widely observed to come mostly from non-profit organizations. However, according to a Parola Analytics report, these organizations have very little presence when it comes to patenting the technologies and methods involved, because they are less inclined to patent their methods.

There is also little activity from media outlets. This is because the goals and incentives of corporate or commercial organizations are different from those of independent, non-profit fact-checking enterprises.

Fig. 1. Top assignees and applicants worldwide for detecting disinformation. (Source)

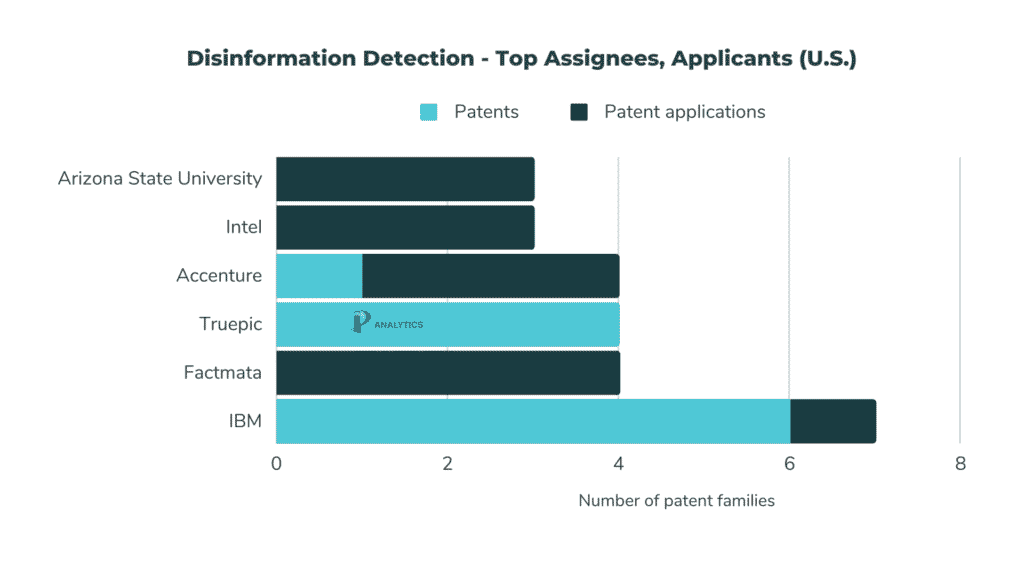

Looking at global patenting activity for detecting disinformation, IBM is the only major player and one of the top U.S. entities. Factmata, a startup, is narrowly ahead of IBM.

In 2016, IBM released a beta fact-checker app that could be added to a browser to evaluate whether a page's contents were true. However, a few months after the release, the company pulled it because, according to a senior software engineer at IBM Watson Research, “we got a lot of feedback that people did not want to be told what was true or not.”

IBM continues to work on its fact-checking technologies. Meanwhile, Factmata has filed patents for tools to track manipulated narratives or propaganda online.

Unlike in China, U.S. patents and patent applications come mostly from corporations, not academia.

Fig. 2. Top U.S. applicants and assignees for disinformation detection (2012-2021).

Further analysis from the report shows that U.S. patents in this space are more concentrated on fact-checking or the verification of text-based information.

There is a resounding call from legislators and society for better regulation of social media to curb the spread of disinformation and fake news. The tech industry is under pressure to police its own platforms and provide meaningful solutions sooner rather than later.

Read our latest mini patent landscape report on the global snapshot of patents addressing disinformation, deep fakes, and fact-checking.